What building Docker taught Solomon Hykes about reliability, security, and community

Containers are now the default: over 91% of organizations run them in production. Kubernetes sits on top, with 85% using it and another 9% evaluating it. Among teams that are mostly or fully cloud native, 37% release multiple times a day. These are the operating conditions for today’s reliability work.

We met Solomon Hykes in Paris during KubeCon. It has been twelve years since the PyCon lightning talk and the Hacker News push that made Docker public sooner than planned, and the story still matters. Adoption arrived before readiness, and the outcomes shaped how teams think about security, observability, pipelines, and scale.

This piece draws out the hard lessons behind Docker’s rise and the ideas now shaping Dagger. The focus stays on what helps engineers ship faster with less chaos.

Tune into our full podcast here.

How has KubeCon Europe been for you?

Solomon

Great and very busy. It is in Paris and I am from Paris, so I have been reconnecting with a lot of old friends. It also feels like a ten-year checkpoint for the container and cloud native movement. There have been a lot of reunions and the whole week has had that energy.

How do you balance staying close to the ecosystem with your responsibilities at Dagger?

Solomon

Most of my time goes into Dagger and the Dagger community. We work in the open and keep it community first. We basically live on our Discord server and spend our days building with users. A lot of that community overlaps with the broader cloud native world, along with application developers and some data scientists, so there is constant cross-pollination. By focusing on Dagger I end up staying in touch with a lot of cloud native folks anyway.

Docker was started in 2008 as dotCloud, right?

Solomon

That is right. We started in France in 2008 as dotCloud, then moved to the Bay Area in 2010. We pivoted to Docker in 2013.

You first showed Docker at PyCon in 2013. What happened in that room?

Solomon

We had a lightning talk and showed an unreleased version of Docker. I expected a small side room with maybe thirty people. PyCon did lightning talks on the main stage, so there were several hundred people. The reaction was very different from what I was used to. People were excited. Someone posted our unfinished site to Hacker News and called it vaporware since it was not open source yet. We decided to ship in two weeks. We sprinted, open sourced it, and put it out as fast as we could.

With hindsight, is there anything you would have done differently during that early journey?

Solomon

I would have taken things less personally. There are many ups and downs in a startup and in an open source community. It is only code. I love building things and it is easy to identify with what you build, like when you make a Lego castle and someone says they do not like it. You get attached and you get upset. With more experience I learned to enjoy the positive parts and ride out the negative ones. Everyone is always upset about something on the internet. I complain about things too. I wish I had started with that mindset from the beginning because it is more fun.

When did it become clear that Docker would change how teams build and run software?

Solomon

Part of my brain always felt that the architecture was right, but I was young and had no idea what it meant to get the world to adopt something new. For years it was just hard to get anyone to care. Around the PyCon presentation something shifted. The reaction in the room was different. It surprised me because I had been working on containers for five years in different iterations. It was the same core idea, but this version clicked. Sometimes one change that seems small to you is the one that changes everything from the outside.

Docker became the default for containers, and every move was scrutinized. Do you feel freer to innovate at Dagger?

Solomon

Yes. It is fun to work on a new project with a smaller team. We launched Dagger two years ago and have been building the community with users in the open. It started as a niche, then in the last six months adoption picked up and the community grew. It still feels human scale. People know each other. It reminds me of the early Docker days. You can move fast, try things, and see what resonates without the pressure of steering a battleship. I like moving fast and I like the early phase.

Docker went from a developer tool to running production workloads everywhere. How did you architect the Docker engine to handle that kind of scale?

Solomon

We did not at the beginning. We were trying things and got surprised by the speed of adoption of that prototype. Teams put it in production before we felt it was ready. We told people to wait and they ran it anyway because the benefits were strong for some workloads. We then spent years catching up to the community. We stabilized, scaled, and refactored as fast as we could. A good example is Docker build. The Dockerfile started as a simple experiment I added after launch. It was taped together and not in the original version. It became popular, so we had to fix it. Over time we hired great engineers and rebuilt that path. BuildKit became a redesigned backend for Docker build and made it robust.

When you are used by the Fortune 500, security becomes a top priority. What did you think about security during Docker’s growth?

Solomon

Security is always a balance of risks and tradeoffs. You cannot protect perfectly against every possible risk, so you decide what is acceptable and at what cost. Containers reshuffled that calculation for the security community. We introduced new variables and everyone had to figure out what it meant to secure applications and infrastructure in a containerized world. A lot of it was a conversation about acceptable risk, and people do not always agree since assessment is subjective. Over time the community matured and best practices settled. Just in time for AI to arrive and reshuffle things again.

The container landscape has many players now and real fragmentation. How did Docker navigate that and is there a similar vision for Dagger?

Solomon

Adoption was so fast that it was not clear how far it would go. We did not know if one hundred percent of applications would always run on Docker. When Kubernetes arrived the same question came up. Would everything run on Kubernetes or would Docker be for development and Kubernetes for production. The same uncertainty was there for the cloud. Would everything move to the cloud or would it be a mix. Now it is clear this is a fragmented world. There will not be one runtime that runs everything.

Fragmentation is accelerating because there is so much software across edge, data center, and devices. Cloud and on-prem will both continue. Containers, VMs, WebAssembly, JavaScript isolates, and whatever comes next will coexist. Even within containers there are many Kubernetes distributions and extensions. Docker’s job is to be a good steward of the community in its slice of the ecosystem. It is fine to coexist with other runtimes. Dagger was built around this insight. You cannot standardize on one runtime at large scale. We help you standardize on the pipeline that deploys to the runtime. If you are an enterprise you need to standardize something. If you cannot standardize on Kubernetes or Docker or OpenShift or Lambda, you accept that you will deal with many targets. Our approach is to focus on a thin slice of the stack: the pipelines. The goal is to run any pipeline, ideally every pipeline.

How did observability evolve inside Docker and across the ecosystem?

Solomon

In the ecosystem there was a lot of innovation. Every segment of the infrastructure stack was reinvented around containers. When I started in systems we used Nagios to monitor servers. When we built our first container platform in the cloud before spinning it out as Docker we had problems in our small world. Containers were very dynamic and Nagios had to be configured by hand for servers. These were not servers. There were thousands of containers coming and going. We hacked Nagios so it could be dynamic again. That was generation one of observability for us. Now there are container-native observability tools that assume containers are dynamic. They start from that reality. It is nice to see that once containers existed you could build higher on top.

What is a problem observability tools still do not solve well?

Solomon

It is still hard to know what is going on in your system. There is a gap between the application world and the infrastructure world. Observability means different things to those groups. Unifying them would be ideal, but it is difficult, and the space is fragmented. That remains a challenge.

There are many users with different levels of experience. How did you think about making Docker accessible to beginners while still serving advanced teams?

Solomon

I care a lot about making technology accessible and simple. Making something simple is very hard, but it scales better because one team does the hard work and everyone benefits. At the start Docker was considered simple, yet if you used the first version today you would still find setup rough. On a Mac you had to install and configure a Linux virtual machine yourself, choose the distribution, SSH into it, install Docker, and then use Docker. Over time we invested a lot of design and engineering to remove that friction. Docker for Mac and Docker for Windows were big projects to make it click and run. The community design mattered too. The whale, the drawings, a friendlier tone. People told me they felt like they belonged, even as beginners. At the time many DevOps tools felt dark and exclusive. We wanted the opposite and that helped more people get started.

Docker Hub handles enormous traffic. How did you approach availability and scale there?

Solomon

It was a constant effort. The Hub existed from day one, although it was first called the Docker index. The point was to let the very first command be docker run something and have it work. We did not expect so many users. We had a chart in the office showing downloads and it kept climbing. Over the years we hit typical infrastructure bottlenecks and kept scrambling to keep up. At the end of the day it is hosting files, which is difficult at a very large scale but not as hard as hosting live applications, which is what we did before with our platform-as-a-service. We were happy to focus on scaling distribution of images instead of running customer apps in production.

What did disaster recovery look like for Docker Hub?

Solomon

Yes, there were strategies in place, and I am sure they are much more advanced now. To be honest I was not personally involved in that work at the time. We had an excellent infrastructure team and an engineering group that owned it. That layer was abstracted away from me, which I was happy about since I had done it in previous roles.

You are working on simplifying CI and CD for modern teams. What is the vision for Dagger and what is the team focused on now?

Solomon

The idea is to reduce the pain that every team feels in CI pipelines. Most pipelines are a pile of scripts and YAML that grow in complexity as the application grows. Someone ends up designated as the DevOps person and it becomes a burden. You cannot easily develop the pipeline locally. You push to a black box service and wait to find out if you made a mistake. If you treat the pipeline as software the experience is terrible. Dagger lets you take those scripts and YAML and replace them with clean code. You write small functions where each function is one task in the pipeline. Build, deploy, lint, anything. Dagger gives you a clean API for these functions and runs the containers for you. You compose them, you reuse them, and the pipeline becomes a set of pieces you can connect.
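
As an editorial illustration of the idea Solomon describes, here is a minimal sketch in plain Python of a pipeline written as small, composable functions. It does not use the Dagger SDK or its API. The `Artifact` type, the `lint`, `build`, and `deploy` steps, and the `run_pipeline` helper are all hypothetical names chosen for this example, and the steps only record that they ran instead of starting containers.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Artifact:
    """Hypothetical value passed from one pipeline step to the next."""
    source: str
    log: List[str] = field(default_factory=list)


# A step is any function that takes an Artifact and returns a new Artifact.
Step = Callable[[Artifact], Artifact]


def lint(artifact: Artifact) -> Artifact:
    # Placeholder check; a real step would run a linter in a container.
    if not artifact.source.strip():
        raise ValueError("lint failed: empty source")
    return Artifact(artifact.source, artifact.log + ["lint"])


def build(artifact: Artifact) -> Artifact:
    # Placeholder; a real step would produce a build output.
    return Artifact(artifact.source, artifact.log + ["build"])


def deploy(artifact: Artifact) -> Artifact:
    # Placeholder; a real step would push to a runtime target.
    return Artifact(artifact.source, artifact.log + ["deploy"])


def run_pipeline(steps: List[Step], artifact: Artifact) -> Artifact:
    """Run each step in order, feeding the output of one into the next."""
    for step in steps:
        artifact = step(artifact)
    return artifact


result = run_pipeline([lint, build, deploy], Artifact("print('hello')"))
print(result.log)  # ['lint', 'build', 'deploy']
```

Because every step shares one signature, steps can be reordered, reused in other pipelines, or run one at a time on a laptop, which is the property the answer above emphasizes.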

We heard you launched functions. Why are they important and how are people using them?

Solomon

Functions were the missing piece. We launched them last week after about six months of work. Because it is open source we built them with the community and many people started using them before we called them finished. The development experience was fun in a space that is rarely fun. Breaking a pipeline into small functions makes it easy to create one at a time and connect them. You can write in Go, Python, or TypeScript. You can reuse across languages. I might have my set of functions in Go and you might have yours in Python, and we can compose them into a common pipeline. CI stands for integration. You need a way to interconnect things. It feels like a Lego club where everyone brings their own pieces and we combine them to build something better.

From a reliability view, debugging CI pipelines is painful. How does Dagger help engineers monitor and troubleshoot?

Solomon

The main reason debugging is hard is the complexity. When you simplify and break the pipeline into small units of code you get the whole developer toolchain back. If your pipeline functions are in Go or Python, you use Go or Python debugging tools on those parts. When something breaks you know which function failed. You can run that function in isolation, mock dependencies, and iterate. Maybe it works with one version of a service and not another. You compare versions and fix it. Once the pipeline is code you get a real development loop. Developers are already good at debugging and they have better tools.
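
As an editorial illustration of running one function in isolation with a mocked dependency, here is a minimal sketch using Python's standard `unittest.mock`. The `deploy` function, its `push` parameter, the image name, and the digest value are hypothetical and are not part of Dagger; the point is only that a pipeline step written as ordinary code can be exercised without the real service behind it.

```python
from typing import Callable
from unittest.mock import Mock


def deploy(image: str, push: Callable[[str], str]) -> str:
    """Hypothetical pipeline step: push an image and return a status line.

    The registry client is passed in as `push` so a test can replace it.
    """
    if ":" not in image:
        raise ValueError(f"image {image!r} has no tag")
    digest = push(image)
    return f"deployed {image} as {digest}"


# Run the step on its own with a mocked dependency: no registry is needed.
fake_push = Mock(return_value="sha256:abc123")
status = deploy("web:1.4.2", fake_push)

# Verify the step called its dependency exactly once with the right image.
fake_push.assert_called_once_with("web:1.4.2")
print(status)  # deployed web:1.4.2 as sha256:abc123
```

Swapping `fake_push` for a mock that returns a different value, or raises an error, is one way to reproduce the "works with one version of a service and not another" case locally.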

Any hard lessons from Docker that you apply at Dagger today?

Solomon

Yes. Do not try to make everyone happy. In open source anyone can ask for a feature or criticize the design. You have to stick to what you think is the right design while always listening to actual users. The distinction between users and bystanders is important. If a random person complains on the internet I do not optimize for that. If a user brings a problem, I care a lot. In the beginning at Docker I wanted every critic to be happy. That is not realistic. I would rather focus on users and keep shipping.

Can you share a nightmare from production that taught you something?

Solomon

Back when we ran dotCloud we hosted customer applications on top of EC2. It was the early days of AWS and they had outages. Sometimes there were load balancing problems. When they had issues we were stuck. Our customers would complain to us and we could not do much about the upstream. One time there was an AWS issue combined with a bug in our service. For a few minutes our router sent traffic to the wrong applications. People visited a website and saw another website. That was the worst production incident I remember. The postmortem was rich and we learned a lot from it.

What lesson from those years still sticks with you?

Solomon

Startups are an emotional roller coaster. When there is a problem it feels like the end of the world. I used to worry a lot about competitors. I told a friend some of those stories and realized I do not even remember many of their names. Most startups fail. If you are still around you are already winning in a way. I try not to worry about competition. I focus on users. You cannot care too much about users. Most of the features we are shipping now are things someone in the community asked for last month or last year.

Any parting advice for reliability leaders?

Solomon

Bake ownership into deployments. Prefer explicit SLOs for user impact. Automate rollbacks. Generate post incident reports from the data you already collect. Treat time to engage like a first class metric along with MTTR. A lot of this work sits in the pipeline layer.
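
As an editorial illustration of tracking time to engage next to MTTR, here is a minimal sketch that computes both from incident timestamps. The definitions are assumptions made for this example: time to engage is taken as the gap between an incident opening and a responder starting work, and MTTR as the mean time from opening to resolution. The `Incident` type and the sample data are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean
from typing import List


@dataclass
class Incident:
    opened: datetime    # when the incident was detected
    engaged: datetime   # when a responder started working on it (assumed definition)
    resolved: datetime  # when service was restored


def mean_minutes(deltas: List[timedelta]) -> float:
    """Average a list of durations and return the result in minutes."""
    return mean(d.total_seconds() for d in deltas) / 60


def time_to_engage(incidents: List[Incident]) -> float:
    return mean_minutes([i.engaged - i.opened for i in incidents])


def mttr(incidents: List[Incident]) -> float:
    return mean_minutes([i.resolved - i.opened for i in incidents])


# Hypothetical sample data: two incidents on the same day.
day = datetime(2024, 3, 20)
incidents = [
    Incident(day.replace(hour=10), day.replace(hour=10, minute=4),
             day.replace(hour=10, minute=40)),
    Incident(day.replace(hour=14), day.replace(hour=14, minute=10),
             day.replace(hour=15)),
]

tte = time_to_engage(incidents)
repair = mttr(incidents)
print(f"time to engage: {tte:.1f} min, MTTR: {repair:.1f} min")
# time to engage: 7.0 min, MTTR: 50.0 min
```

Reporting the two numbers side by side shows how much of the total recovery time passes before anyone starts working on the incident.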

Closing thoughts

Solomon’s stories from Docker and Dagger remind us that adoption always runs ahead of readiness, reliability is a moving target, and simplicity is what scales.

If you want to dive deeper into the war stories and lessons we could not fit here, listen to the full Incidentally Reliable episode with Solomon Hykes.

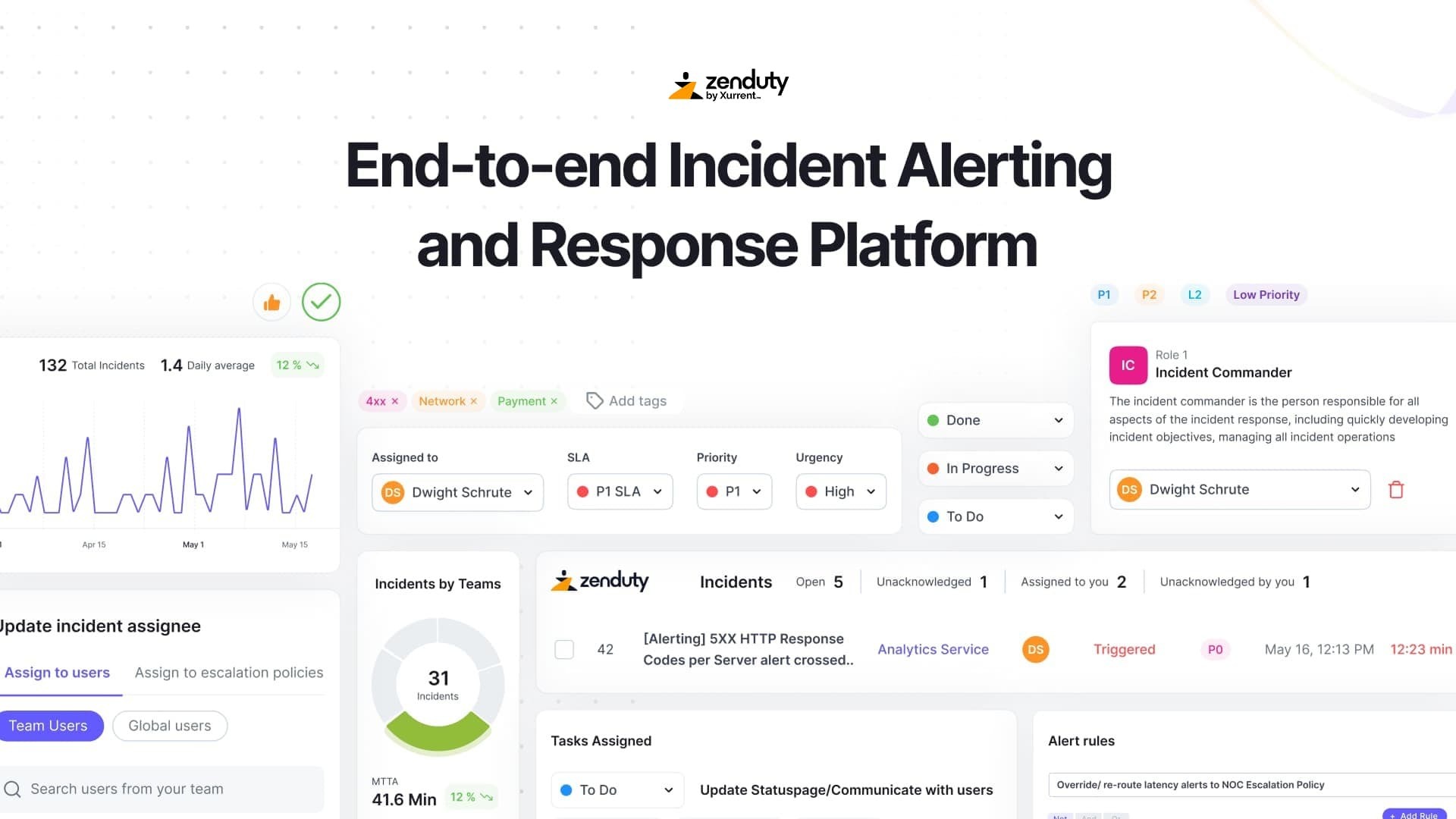

And if your team is looking to cut MTTR, reduce alert fatigue, and build more reliable operations, Zenduty can help.

✅ Start your free trial today and see how much smoother incident response can be.

Rohan Taneja

Writing words that make tech less confusing.